starwhale

An all-in-one MLOps platform that accelerates the AI model development workflow

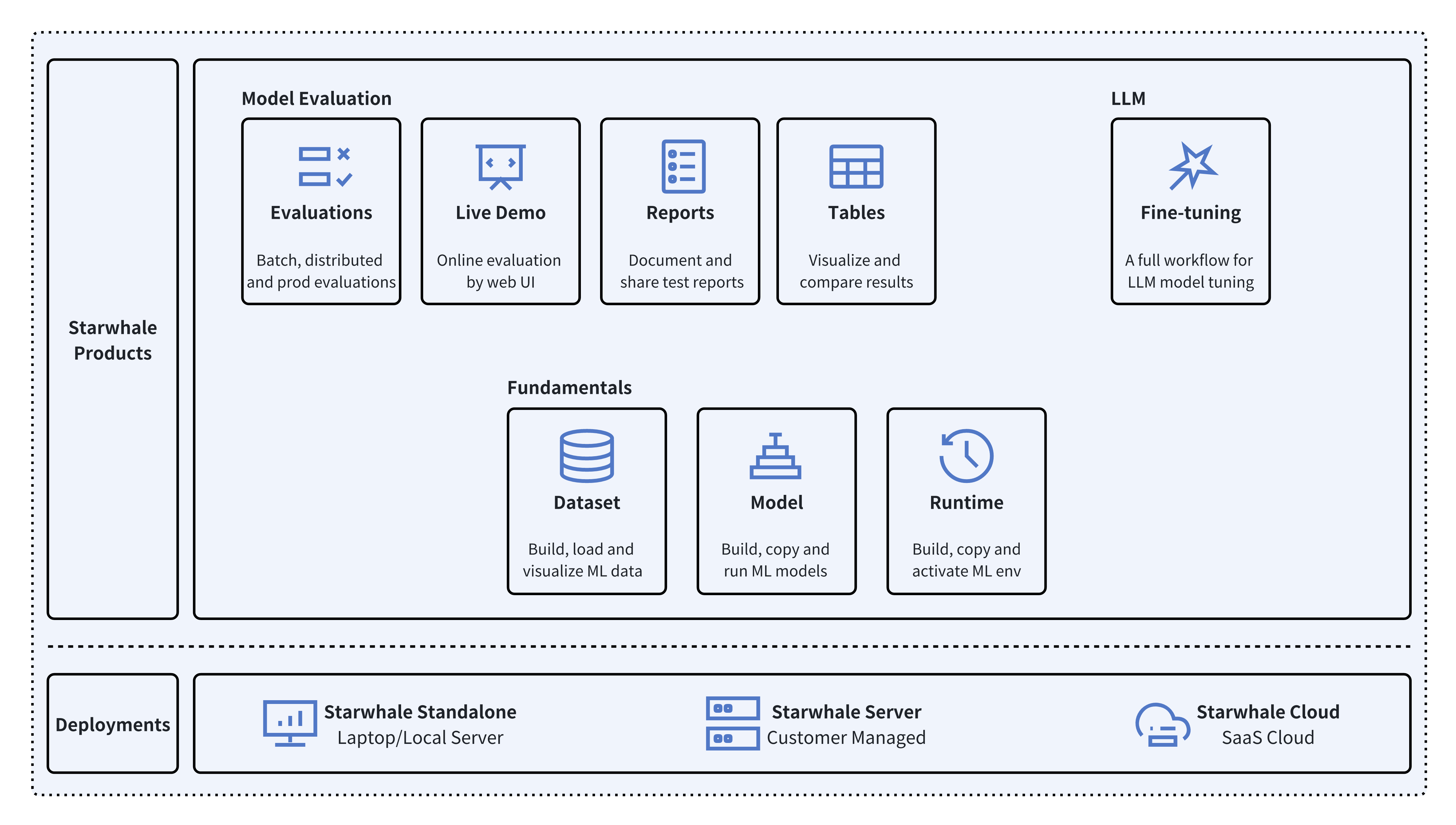

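Starwhale is an open-source MLOps/LLMOps platform that aims to streamline machine learning operations. It provides unified management of models, runtimes, and datasets, and supports model evaluation, live demos, and large language model fine-tuning. Starwhale can be deployed in Standalone, Server, and Cloud editions to suit different scenarios. Its open architecture lets developers build custom MLOps features, giving AI teams an efficient, standardized development environment.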

🚀 ️☁️ Starwhale Cloud is now open to the public, try it! 🎉🍻

</div> <p align="center"> <a href="https://pypi.org/project/starwhale/"> <img src="https://img.shields.io/pypi/v/starwhale?style=flat"> </a> <a href='https://artifacthub.io/packages/helm/starwhale/starwhale'> <img src='https://img.shields.io/endpoint?url=https://artifacthub.io/badge/repository/starwhale' alt='Artifact Hub'/> </a> <a href="https://pypi.org/project/starwhale/"> <img alt="PyPI - Python Version" src="https://img.shields.io/pypi/pyversions/starwhale"> </a> <a href="https://github.com/star-whale/starwhale/actions/workflows/client.yml"> <img src="https://github.com/star-whale/starwhale/actions/workflows/client.yml/badge.svg" alt="Client/SDK UT"> </a> <a href="https://github.com/star-whale/starwhale/actions/workflows/server-ut-report.yml"> <img src="https://github.com/star-whale/starwhale/actions/workflows/server-ut-report.yml/badge.svg" alt="Server UT"> </a> <a href="https://github.com/star-whale/starwhale/actions/workflows/console.yml"> <img src="https://github.com/star-whale/starwhale/actions/workflows/console.yml/badge.svg"> </a> <a href="https://github.com/star-whale/starwhale/actions/workflows/e2e-test.yml"> <img src='https://github.com/star-whale/starwhale/actions/workflows/e2e-test.yml/badge.svg' alt='Starwhale E2E Test'> </a> <a href='https://app.codecov.io/gh/star-whale/starwhale'> <img alt="Codecov" src="https://img.shields.io/codecov/c/github/star-whale/starwhale?flag=controller&label=Java%20Cov"> </a> <a href="https://app.codecov.io/gh/star-whale/starwhale"> <img alt="Codecov" src="https://img.shields.io/codecov/c/github/star-whale/starwhale?flag=standalone&label=Python%20cov"> </a> </p> <h4 align="center"> <p> <b>English</b> | <a href="https://github.com/star-whale/starwhale/blob/main/README_ZH.md">中文</a> <p> </h4>

What is Starwhale

Starwhale is an MLOps/LLMOps platform that brings efficiency and standardization to machine learning operations. It streamlines the model development lifecycle, enabling teams to optimize their workflows around key areas such as model building, evaluation, release, and fine-tuning.

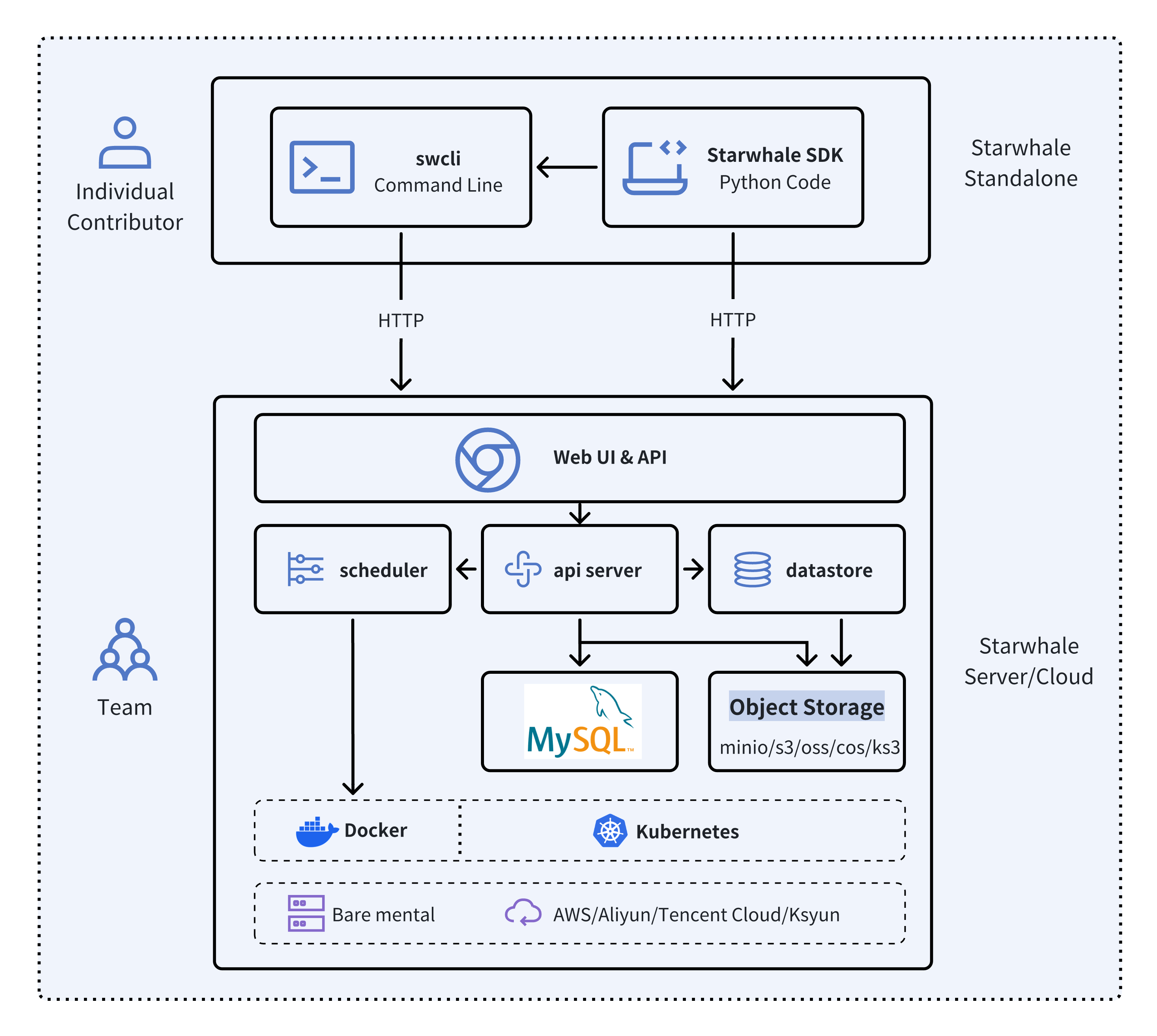

Starwhale meets diverse deployment needs with three flexible configurations:

- 🐥 Standalone - Deployed in a local development environment, managed by the `swcli` command-line tool, meeting development and debugging needs.

- 🦅 Server - Deployed in a private data center, relying on a Kubernetes cluster, providing centralized, web-based, and secure services.

- 🦉 Cloud - Hosted on a public cloud, with the access address https://cloud.starwhale.cn. The Starwhale team is responsible for maintenance, and no installation is required. You can start using it after registering an account.

At its core, Starwhale abstracts Model, Runtime and Dataset as first-class citizens, providing the fundamentals for streamlined operations. Starwhale further delivers tailored capabilities for common workflow scenarios including:

- 🔥 Model Evaluation - Implement robust, production-scale evaluations with minimal coding through the Python SDK.

- 🌟 Live Demo - Interactively assess model performance through user-friendly web interfaces.

- 🌊 LLM Fine-tuning - End-to-end toolchain from efficient fine-tuning to comparative benchmarking and publishing.

Starwhale is also an open source platform, using the Apache-2.0 license. The Starwhale framework is designed for clarity and ease of use, empowering developers to build customized MLOps features tailored to their needs.

Key Concepts

🐘 Starwhale Dataset

Starwhale Dataset offers efficient data storage, loading, and visualization capabilities, making it a dedicated data management tool for machine learning and deep learning.

```python
import torch
from starwhale import dataset, Image

# build dataset for starwhale cloud instance
with dataset("https://cloud.starwhale.cn/project/starwhale:public/dataset/test-image", create="empty") as ds:
    for i in range(100):
        ds.append({"image": Image(f"{i}.png"), "label": i})
    ds.commit()

# load dataset
ds = dataset("https://cloud.starwhale.cn/project/starwhale:public/dataset/test-image")
print(len(ds))
print(ds[0].features.image.to_pil())
print(ds[0].features.label)

# convert to a PyTorch dataset and iterate in batches
torch_ds = ds.to_pytorch()
torch_loader = torch.utils.data.DataLoader(torch_ds, batch_size=5)
print(next(iter(torch_loader)))
```
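The dataset URI used above appears to follow a `<instance>/project/<project>/dataset/<name>` layout. As a rough illustration only, the sketch below splits such a URI with Python's standard library. The `split_resource_uri` helper and its field names are hypothetical and not part of the Starwhale SDK, and the reading of the path segments is an assumption based on the example URI.

```python
from urllib.parse import urlparse


def split_resource_uri(uri: str) -> dict:
    """Split a Starwhale-style cloud URI into its visible parts.

    Hypothetical helper: the segment meanings are inferred from the
    example URI in this README, not taken from the Starwhale SDK.
    """
    parsed = urlparse(uri)
    segments = [s for s in parsed.path.split("/") if s]
    # Path segments are read as key/value pairs,
    # e.g. project/<project>/dataset/<name>.
    if len(segments) % 2 != 0:
        raise ValueError(f"unexpected path layout: {parsed.path!r}")
    parts = {"instance": f"{parsed.scheme}://{parsed.netloc}"}
    for key, value in zip(segments[0::2], segments[1::2]):
        parts[key] = value
    return parts


if __name__ == "__main__":
    uri = "https://cloud.starwhale.cn/project/starwhale:public/dataset/test-image"
    print(split_resource_uri(uri))
```

Read this way, the same project prefix is shared by dataset and model URIs on one instance, which is why the copy target for a model needs only the project part.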

🐇 Starwhale Model

Starwhale Model is a standard format for packaging machine learning models that can be used for various purposes, such as model fine-tuning, model evaluation, and online serving. A Starwhale Model contains the model file, inference code, configuration files, and any other files required to run the model.

```bash
# model build
swcli model build . --module mnist.evaluate --runtime pytorch/version/v1 --name mnist

# model copy from standalone to cloud
swcli model cp mnist https://cloud.starwhale.cn/project/starwhale:public

# model run
swcli model run --uri mnist --runtime pytorch --dataset mnist
swcli model run --workdir . --module mnist.evaluator --handler mnist.evaluator:MNISTInference.cmp
```
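The `--handler` value above has the shape `module:Class.method`. The sketch below shows one way such a string could be resolved to a callable using only the standard library. It illustrates the naming convention, not how `swcli` actually loads handlers; `resolve_handler` is a hypothetical helper.

```python
import importlib
from typing import Any


def resolve_handler(spec: str) -> Any:
    """Resolve a 'module:attr.path' string to a Python object.

    Hypothetical helper for illustration; swcli's real loading logic
    may differ.
    """
    module_name, sep, attr_path = spec.partition(":")
    if not sep or not module_name or not attr_path:
        raise ValueError(f"expected 'module:attr.path', got {spec!r}")
    obj: Any = importlib.import_module(module_name)
    # Walk the dotted attribute path, e.g. "MNISTInference.cmp".
    for name in attr_path.split("."):
        obj = getattr(obj, name)
    return obj


if __name__ == "__main__":
    # A standard-library stand-in for "mnist.evaluator:MNISTInference.cmp".
    handler = resolve_handler("json:JSONEncoder.encode")
    print(handler.__qualname__)
```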

🐌 Starwhale Runtime

Starwhale Runtime aims to provide a reproducible and sharable running environment for Python programs. You can easily share your working environment with teammates or outsiders, and use theirs in turn. Furthermore, you can run your programs on Starwhale Server or Starwhale Cloud without worrying about dependencies.

```bash
# build from runtime.yaml, conda env, docker image or shell
swcli runtime build --yaml runtime.yaml
swcli runtime build --conda pytorch --name pytorch-runtime --cuda 11.4
swcli runtime build --docker pytorch/pytorch:1.9.0-cuda11.1-cudnn8-runtime
swcli runtime build --shell --name pytorch-runtime

# runtime activate
swcli runtime activate pytorch

# integrated with model and dataset
swcli model run --uri test --runtime pytorch
swcli model build . --runtime pytorch
swcli dataset build --runtime pytorch
```

🐄 Starwhale Evaluation

Starwhale Evaluation enables users to evaluate sophisticated, production-ready distributed models by writing just a few lines of code with the Starwhale Python SDK.

```python
import typing as t

import gradio
from starwhale import evaluation, Image
from starwhale.api.service import api


def model_generate(image):
    # placeholder: run model inference here and produce both values
    ...
    return predict_value, probability_matrix


# run prediction on 4 replicas, each with one GPU
@evaluation.predict(
    resources={"nvidia.com/gpu": 1},
    replicas=4,
)
def predict_image(data: dict, external: dict) -> t.Any:
    return model_generate(data["image"])


# runs after predict_image and consumes its logged results
@evaluation.evaluate(use_predict_auto_log=True, needs=[predict_image])
def evaluate_results(predict_result_iter: t.Iterator):
    for _data in predict_result_iter:
        ...
    evaluation.log_summary({"accuracy": 0.95, "benchmark": "test"})


# web handler for the live demo: file upload in, label probabilities out
@api(gradio.File(), gradio.Label())
def predict_view(file: t.Any) -> t.Any:
    with open(file.name, "rb") as f:
        data = Image(f.read(), shape=(28, 28, 1))
    _, prob = predict_image({"image": data}, external={})
    return {i: p for i, p in enumerate(prob)}
```
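The `evaluate_results` function above logs a fixed summary. As a minimal sketch of what the elided loop might compute, the function below derives accuracy from an iterable of prediction records. The record layout (`{"output": ..., "input": {"label": ...}}`) is an assumption made for illustration and may not match what Starwhale actually yields; the code runs without the Starwhale SDK.

```python
import typing as t


def accuracy_from_results(results: t.Iterable[t.Dict[str, t.Any]]) -> float:
    """Compute accuracy from prediction records.

    Assumed record layout (hypothetical, for illustration only):
    {"output": <predicted label>, "input": {"label": <true label>}}
    """
    total = 0
    correct = 0
    for record in results:
        total += 1
        if record["output"] == record["input"]["label"]:
            correct += 1
    if total == 0:
        raise ValueError("no prediction results to evaluate")
    return correct / total


if __name__ == "__main__":
    fake_results = [
        {"output": 7, "input": {"label": 7}},
        {"output": 2, "input": {"label": 2}},
        {"output": 1, "input": {"label": 9}},
        {"output": 0, "input": {"label": 0}},
    ]
    summary = {"accuracy": accuracy_from_results(fake_results), "benchmark": "test"}
    print(summary)
```

In a real handler, a dictionary like `summary` would be passed to `evaluation.log_summary` in place of the hard-coded values.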

🦍 Starwhale Fine-tuning

Starwhale Fine-tuning provides a full workflow for Large Language Model (LLM) tuning, including batch model evaluation, live demo, and model release capabilities. The fine-tuning Python SDK requires only a decorated training function:

```python
import typing as t

from starwhale import finetune, Dataset
from transformers import Trainer


@finetune(
    resources={"nvidia.com/gpu": 4, "memory": "32G"},
    require_train_datasets=True,
    require_validation_datasets=True,
    model_modules=["evaluation", "finetune"],
)
def lora_finetune(train_datasets: t.List[Dataset], val_datasets: t.List[Dataset]) -> None:
    # init model and tokenizer
    trainer = Trainer(
        model=model,
        tokenizer=tokenizer,
        train_dataset=train_datasets[0].to_pytorch(),  # convert Starwhale Dataset into Pytorch Dataset
        eval_dataset=val_datasets[0].to_pytorch(),
    )
    trainer.train()
    trainer.save_state()
    trainer.save_model()
    # save weights, then Starwhale SDK will package them into Starwhale Model
```

Installation

🍉 Starwhale Standalone

Requirements: Python 3.7 to 3.11 on Linux or macOS.

```bash
python3 -m pip install starwhale
```
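To confirm that an interpreter meets the stated requirements, a quick check like the one below can help. It is a small sketch based only on the requirements listed above (Python 3.7 to 3.11, Linux or macOS); `meets_requirements` is a hypothetical helper, not part of Starwhale.

```python
import platform
import sys
from typing import Tuple


def meets_requirements(version: Tuple[int, int], system: str) -> bool:
    """Return True if the Python version and OS match the README requirements."""
    supported_version = (3, 7) <= version <= (3, 11)
    supported_os = system in ("Linux", "Darwin")  # Darwin is macOS
    return supported_version and supported_os


if __name__ == "__main__":
    current = (sys.version_info.major, sys.version_info.minor)
    ok = meets_requirements(current, platform.system())
    print(f"Python {current[0]}.{current[1]} on {platform.system()}: supported={ok}")
```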

🥭 Starwhale Server

Starwhale Server is delivered as a Docker image, which can be run with Docker directly or deployed to a Kubernetes cluster. On a laptop, the `swcli server start` command is an appropriate choice; it depends on Docker and Docker Compose.

```bash
swcli server start
```

Quick Tour

We use MNIST as the hello world example to show the basic Starwhale Model workflow.

🪅 MNIST Evaluation in Starwhale Standalone

- To use your own Python environment, follow the Standalone quickstart doc.

- To use a Google Colab environment, follow the Jupyter notebook example.

🪆 MNIST Evaluation in Starwhale Server

- To run it in your private Starwhale Server instance, read the Server installation (minikube) and Server quickstart docs.

- To run it in Starwhale Cloud, read the Cloud quickstart doc.

Examples

- 🚀 LLM:
  - 🐊 OpenSource LLMs Leaderboard: Evaluation, Code
  - 🐢 Llama2: Run llama2 chat in five minutes, Code
  - 🦎 Stable Diffusion: Cloud Demo, Code
  - 🦙 LLAMA evaluation and fine-tune
  - 🎹 Text-to-Music: Cloud Demo, Code
  - 🍏 Code Generation: Cloud Demo, Code

- 🌋 Fine-tuning:
  - 🐏 Baichuan2: Cloud Demo, Code
  - 🐫 ChatGLM3: Cloud Demo, Code
  - 🦏 Stable Diffusion: Cloud Demo, Code

- 🦦 Image Classification:
  - 🐻❄️ MNIST: Cloud Demo, Code
  - 🦫 CIFAR10
  - 🦓 Vision Transformer(ViT): Cloud Demo, Code

- 🐃 Image Segmentation:
  - Segment Anything(SAM): Cloud Demo, Code

- 🐦 Object Detection:
  - 🦊 YOLO: Cloud Demo, Code
  - 🐯 Pedestrian Detection

- 📽️ Video Recognition: UCF101

- 🦋 Machine Translation: Neural machine translation

- 🐜 Text Classification: AG News

- 🎙️ Speech Recognition: Speech Command

Documentation, Community, and Support

- Visit the Starwhale homepage.

- Find more information in the official documentation.

- For general questions and support, join the Slack.

- For bug reports and feature requests, please use GitHub Issues.

- To get community updates, follow @starwhaleai on Twitter.

- For Starwhale artifacts, please visit:
  - Python Package on PyPI.
  - Helm Charts on Artifact Hub.
  - Docker Images on Docker Hub, GitHub Packages and Starwhale Registry.

- Additionally, you can always find us at developer@starwhale.ai.

Contributing

🌼👏 PRs are always welcome 👍🍺. See Contribution to Starwhale for more details.

License

Starwhale is licensed under the Apache License 2.0.
